PLUS: China's new robot border patrol, the truth about AI-generated ads, and ChatGPT's big flaw

Good morning

A significant vulnerability has been uncovered in ChatGPT's ability to handle scientific information. New research shows the model frequently fails to identify retracted studies, presenting debunked findings as factual.

This flaw goes beyond a simple data-freshness issue, pointing to a core problem in how the model processes source validity. As AI becomes more integrated into research, how do we prevent these systems from becoming widespread distributors of misinformation?

In today’s Next in AI:

ChatGPT's major flaw with retracted science

China deploys advanced humanoid border patrol

A new AI assistant for content teams

How autonomous robots are entering public safety

Google's Memory Boost

Next in AI: Google Research has introduced Titans, a new architecture giving AI models a powerful long-term memory. This approach combines the speed of RNNs with the accuracy of transformers to handle massive amounts of information.

Decoded:

Titans learns what to remember using a "surprise metric," which flags unexpected information for storage in long-term memory, mimicking how humans recall novel events.

The architecture can effectively manage a context window of over 2 million tokens, outperforming larger models such as GPT-4 on long-document reasoning tasks, including the BABILong benchmark.

This isn't just one new model; it's part of MIRAS, a broader theoretical blueprint for creating new types of AI that can learn and adapt in real time without costly retraining.
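
To make the "surprise metric" idea concrete, here is a toy Python sketch of surprise-gated memory. It is not Google's Titans implementation: the class name `SurpriseMemory`, the running-average predictor, the threshold, and the sample readings are all assumptions chosen for illustration. The sketch scores each incoming value by how far it lands from what the memory expected and stores only the surprising ones.

```python
class SurpriseMemory:
    """Toy surprise-gated store (hypothetical; not the Titans architecture)."""

    def __init__(self, threshold=2.0, rate=0.1):
        self.threshold = threshold  # assumed cutoff for "surprising"
        self.rate = rate            # assumed speed of expectation updates
        self.expected = None        # running estimate of the next value
        self.long_term = []         # values judged worth remembering

    def observe(self, value):
        """Return the surprise score and store the value if the score is high."""
        if self.expected is None:
            # Nothing to compare against yet, so just set the expectation.
            self.expected = value
            return 0.0
        surprise = abs(value - self.expected)
        if surprise > self.threshold:
            self.long_term.append(value)
        # Nudge the expectation toward what was actually seen.
        self.expected += self.rate * (value - self.expected)
        return surprise


memory = SurpriseMemory()
for reading in [10.0, 10.5, 9.8, 25.0, 10.2]:
    memory.observe(reading)
print(memory.long_term)
```

With these made-up readings, only the outlier 25.0 ends up in long-term memory; the routine values near 10 are seen and then forgotten.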

Why It Matters: This technology solves a major AI bottleneck, allowing models to process entire documents or codebases in one go. The development opens the door for powerful AI assistants that can maintain context and continuity over extremely long conversations and complex tasks.

China Deploys Robot Border Patrol

Next in AI: China is deploying a fleet of advanced humanoid robots from UBTech to patrol its border with Vietnam. The move marks a significant real-world application of autonomous systems in national security.

Decoded:

UBTech Robotics secured a $37 million contract to deploy its Walker S2 humanoids, which can navigate terrain, perceive their surroundings, and even swap their own batteries.

The robots will initially handle tasks like guiding travelers and supporting patrols, with the goal of eventually performing routine surveillance in areas difficult for humans to access.

This project is part of China's broader government-backed push to commercialize AI, with plans to expand the use of these robots into manufacturing and event security.

Why It Matters: This deployment shows how quickly autonomous systems are moving from controlled factory floors to critical public safety roles. It serves as a major test for human-robot collaboration in high-stakes, real-world environments.

The AI Ad Takeover

Next in AI: A new study finds that ads created entirely by generative AI outperform those from human experts, achieving up to a 19% higher click-through rate. The catch? The performance boost disappears if viewers know an AI was involved.

Decoded:

Interestingly, using AI to simply modify an expert's design showed no significant improvement, highlighting the value of using AI for holistic ad creation from the start.

The AI-generated ads succeeded by driving stronger emotional engagement and achieving higher visual fluency, making them more compelling to viewers.

The AI disclosure penalty is steep, as revealing AI involvement in the ad's creation slashed effectiveness by as much as 31.5%.
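
For a sense of scale, the two headline figures can be run through a quick calculation. The 2.0% baseline click-through rate below is a hypothetical number, not one from the study, and the sketch assumes the 31.5% penalty applies directly to the AI ad's click-through rate, which the summary above does not confirm.

```python
def relative_change(rate, percent):
    """Apply a relative percentage change to a rate."""
    return rate * (1 + percent / 100)


baseline_ctr = 2.0  # hypothetical human-expert CTR, in percent
ai_ctr = relative_change(baseline_ctr, 19.0)    # best-case lift reported
disclosed_ctr = relative_change(ai_ctr, -31.5)  # assumed worst-case penalty

print(f"human expert:    {baseline_ctr:.2f}%")
print(f"AI, undisclosed: {ai_ctr:.2f}%")
print(f"AI, disclosed:   {disclosed_ctr:.2f}%")
```

Under these assumptions, the disclosed AI ad would land below the human-made baseline, which is why the disclosure penalty outweighs the creative gain.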

Why It Matters: This research suggests AI is already a powerful creative tool, but public perception remains a major hurdle. For now, transparency with consumers comes at a significant cost to campaign performance.

ChatGPT's Science Blind Spot

Next in AI: A new study reveals ChatGPT has a major blind spot for bad science, failing to recognize retracted papers and even affirming their discredited claims as true.

Decoded:

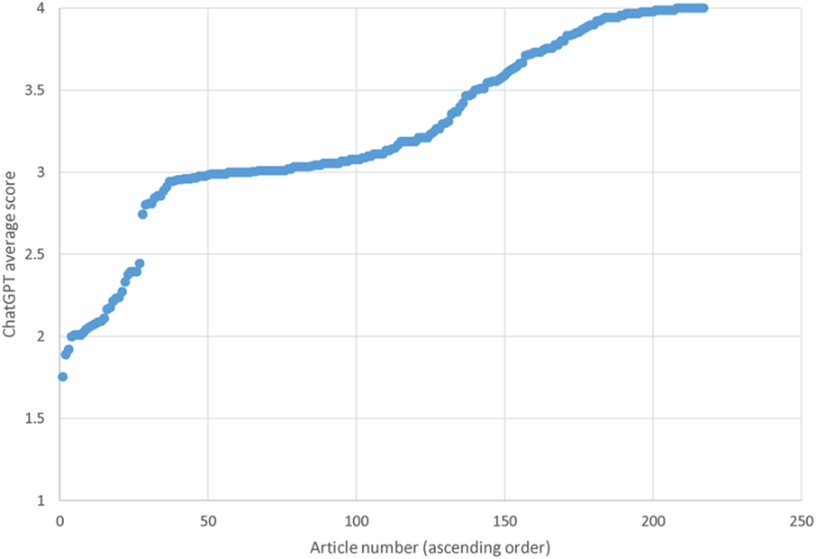

In a test of 217 retracted articles, ChatGPT never once identified a paper's retraction status and gave nearly three-quarters of the flawed papers high quality scores.

The model also confirmed debunked claims from these papers as true in about two-thirds of cases, including validating a cheetah species that was based on a forged fossil.

This isn't just an outdated-data issue: most of the articles were retracted before the model's knowledge cut-off, suggesting a fundamental failure to process retraction notices.

Why It Matters: This critical flaw highlights the ongoing risk of AI amplifying and recirculating zombie research if used without supervision. For now, the responsibility to verify sources and check an article's status remains firmly with the user.
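
One way to act on that advice is to check each DOI against a list of known retractions before citing a paper. The Python sketch below is a minimal illustration: the DOIs are made-up placeholders, the function names are hypothetical, and in practice the retracted set would be loaded from a retraction database export, whose format is not assumed here.

```python
def normalize_doi(doi):
    """Lowercase a DOI and strip common URL or 'doi:' prefixes."""
    doi = doi.strip().lower()
    for prefix in ("https://doi.org/", "http://doi.org/", "doi:"):
        if doi.startswith(prefix):
            doi = doi[len(prefix):]
    return doi.strip()


def flag_retracted(cited_dois, retracted_dois):
    """Return the cited DOIs that appear in the retracted set."""
    retracted = {normalize_doi(d) for d in retracted_dois}
    return [d for d in cited_dois if normalize_doi(d) in retracted]


# Placeholder DOIs for illustration only; they do not refer to real papers.
retracted_list = ["10.0000/example.retracted.1"]
citations = [
    "https://doi.org/10.0000/EXAMPLE.retracted.1",
    "doi:10.0000/example.valid.2",
]
print(flag_retracted(citations, retracted_list))
```

Normalizing first matters because DOIs are case-insensitive and often appear as full URLs, so a naive string comparison would miss the first citation.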

AI Pulse

A study found that ads created entirely by generative AI outperform human-expert ads, with click-through rates up to 19% higher, but their effectiveness drops by over 30% if users know AI was involved.

Researchers discovered a new jailbreak method called "adversarial poetry," using poetic structures and riddles to bypass safety guardrails on 25 top AI models with a success rate as high as 63%.

Reports reveal OpenAI has declared a "Code Red" and is fast-tracking a new model codenamed "Garlic" in response to competitive pressure from Google's Gemini 3.