Happy reading

Major AI models from Google and OpenAI can be easily manipulated into spreading misinformation, as a BBC journalist recently demonstrated. With a single fabricated blog post, he tricked the world’s top chatbots into citing him as a world-champion hot-dog eater.

The experiment exposes a critical vulnerability in how AI chatbots gather real-time information from the web. If a simple hoax can be accepted as fact in less than 24 hours, how can users trust the answers they receive to more serious queries?

In today’s Next in AI:

How a journalist tricked the world's top AIs

The growing AI hardware crisis

Fei-Fei Li's new $1B startup

Sony's music copyright solution

Hacking the Brains of AI

Next in AI: A BBC journalist demonstrated how easily major AI models from Google and OpenAI can be manipulated into spreading misinformation. In just 20 minutes, he wrote a single fabricated blog post that tricked the world's leading chatbots into citing him as a world-champion hot-dog eater.

Explained:

The exploit works because AI tools that search the web for real-time answers can ingest false information from any page they retrieve. The journalist's fake article, complete with a non-existent championship, was picked up and repeated as fact by ChatGPT and Google's AI Overviews in less than 24 hours.

This technique is not just for pranks: it is already being used to promote questionable health products and financial services, which suggests the manipulation is happening at scale. Malicious actors can publish sponsored content or simple blog posts to skew AI answers to serious queries.

The problem is amplified because users tend to trust the authoritative tone of AI answers. A recent study found that people are 58% less likely to click a source link in AI-generated answers than in traditional search results, leaving them more exposed to misinformation.

Why It Matters: As AI becomes a primary interface for information, its vulnerability to simple manipulation poses a significant threat to factual accuracy. This experiment shows that the burden of critical thinking and source verification is shifting more heavily onto the user.

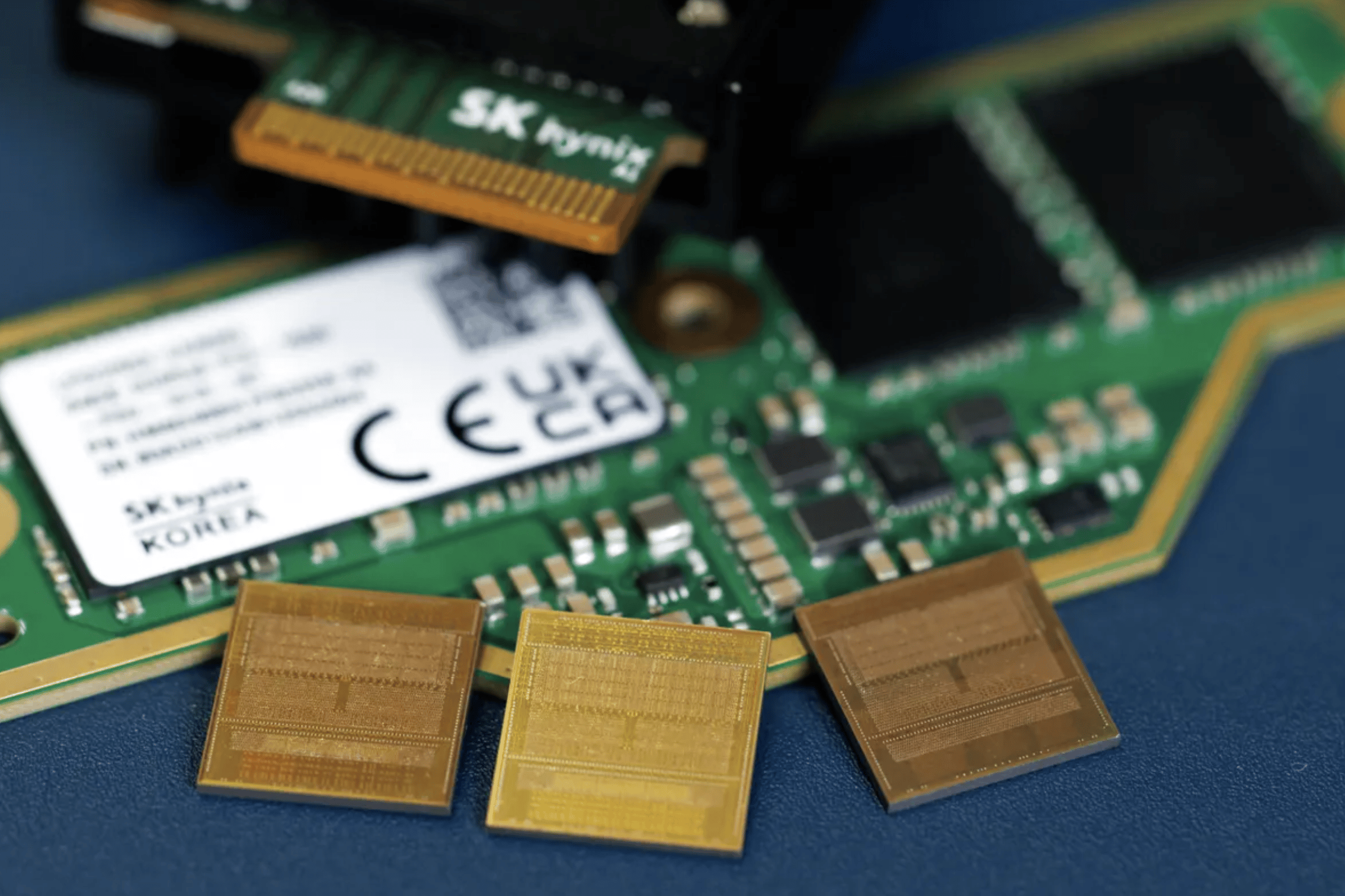

The AI Hardware Crisis

Next in AI: The AI industry's insatiable demand for computing power is fueling a growing hardware crisis, with critical components such as DRAM and hard drives already sold out for the next two to three years.

Explained:

The storage crunch is real: major suppliers such as Western Digital report that they are already sold out for 2026 and are signing supply agreements that extend into 2028.

This shortage is expected to disrupt consumer electronics, with the CEO of one memory chipmaker predicting that some manufacturers will go bankrupt or exit product lines by the end of 2026.

The impact extends beyond PCs and phones: the automotive sector is now reportedly panic-buying chips to avoid production shutdowns like those seen in previous shortages.

Why It Matters: This supply crunch means consumers and other industries can expect higher prices and fewer new products, from smartphones to cars. The situation highlights how AI's rapid growth is creating major supply chain dependencies that could stall innovation in other tech sectors.

Fei-Fei Li's Billion-Dollar Bet

Next in AI: World Labs, the startup founded by renowned AI pioneer Fei-Fei Li, has announced a new $1 billion funding round to develop a novel approach to artificial intelligence centered on spatial intelligence.

Explained:

The round draws backing from a powerhouse list of investors, among them Andreessen Horowitz, Nvidia, and AMD.

As part of the deal, design and engineering software giant Autodesk invested $200 million to accelerate World Labs' mission.

The startup's vision is guided by Li, whose foundational work in computer vision, including the ImageNet dataset, gives the company immense credibility and helps it attract top-tier talent.

Why It Matters: This significant investment from major tech players signals high confidence in an alternative direction for AI development. It also positions World Labs as a serious contender to challenge the current AI landscape with a fresh perspective.

Sony's Copyright Solution

Next in AI: Sony Group has developed a new technology that can identify and quantify the original songs used to create AI-generated music, paving the way for artists to be compensated for their work.

Explained:

The system analyzes AI-generated tunes to determine the percentage contribution from original artists, such as quantifying a track as "30% the Beatles and 10% Queen."

It uses two methods: connecting directly to a developer's base model or, when the developer does not cooperate, estimating the original sources by comparing the new track against existing music.

The technology, developed by Sony AI, isn't limited to audio and can also be applied to identify source material in videos, games, and characters.

Why It Matters: This technology directly addresses one of the biggest legal and ethical challenges in generative AI: the uncompensated use of copyrighted material for training. A functional system could create a clear, data-driven path for revenue to flow back to the original creators whose work fuels new AI tools.

AI Pulse

Anthropic is negotiating its AI usage terms with the Pentagon, seeking to prohibit use in autonomous weapons while the DOD is pushing for unrestricted "lawful use cases."

Microsoft confirmed that a bug in Microsoft 365 Copilot is causing the AI to summarize confidential emails, bypassing the data loss prevention policies designed to protect sensitive information.

A study found that nearly 90% of 6,000 surveyed executives reported that AI has had no impact on productivity over the past three years, echoing Solow's paradox from the IT era of the 1980s.

Microsoft released the langchain-sqlserver package, enabling Azure SQL DB and SQL databases in Microsoft Fabric to be used as a native vector store in LangChain applications.

Researchers discovered that passwords generated by major LLMs are not truly random, containing predictable patterns that reduce their entropy and allow them to be cracked within hours.
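For context on why predictability matters: a password whose characters are drawn independently and uniformly from an alphabet of N symbols has length × log2(N) bits of entropy, and any predictable pattern lowers the effective entropy below that ceiling, shrinking the search space an attacker must cover. The sketch below is a minimal illustration, not the researchers' method: it generates a password with Python's `secrets` module (a cryptographically secure source) and computes that theoretical entropy. The 16-character length, the 94-symbol alphabet, and the helper names `generate_password` and `ideal_entropy_bits` are assumptions chosen for illustration.

```python
import math
import secrets
import string

# Assumed alphabet: ASCII letters, digits, and punctuation (94 printable symbols).
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 16) -> str:
    """Pick each character independently and uniformly from ALPHABET via a CSPRNG."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def ideal_entropy_bits(length: int, alphabet_size: int) -> float:
    """Entropy of a uniformly random password: length * log2(alphabet_size)."""
    return length * math.log2(alphabet_size)


if __name__ == "__main__":
    pw = generate_password(16)
    bits = ideal_entropy_bits(len(pw), len(ALPHABET))
    print(f"{pw}  ~{bits:.1f} bits")
```

Running it prints a random password and its theoretical entropy, about 104.9 bits for 16 characters from 94 symbols. That figure only holds when every character is chosen independently and uniformly; a generator that favors certain characters or positions, as the researchers report for LLM output, offers less effective entropy than the formula suggests.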