PLUS: Amazon's AI book club, Oracle's OpenAI delay, and a China chip reversal

Good morning

OpenAI is responding to user complaints about its overly cautious models by confirming an "adult mode" for ChatGPT, set to launch in early 2026. The new feature will allow for the generation of more unrestricted content, marking a significant pivot from its current safety-first personality.

This decision aims to give users more freedom, but it also walks a fine line between open expression and responsible AI interaction. As OpenAI prepares to split its user base between "safer" and unrestricted experiences, can it successfully navigate the safety and ethical risks that come with more personalized chatbots?

In today’s Next in AI:

OpenAI to launch an uncensored ChatGPT mode

Amazon's AI book club raises author concerns

Oracle denies reported OpenAI data center delays

US reverses ban on advanced chip sales to China

ChatGPT After Dark

Next in AI: OpenAI confirmed it will launch an adult mode for ChatGPT in Q1 2026. The move responds to user feedback about the model's neutered personality and will allow for generating more unrestricted content, including erotica.

Decoded:

This decision stems from user complaints after GPT-5 was updated with a more restrained personality, a change prompted by safety concerns following a wrongful death lawsuit linked to the chatbot.

The feature will rely on new age verification technology to create a split experience, filtering users into either a standard "safer" version or the less restrictive adult mode.

While the goal is to give users more freedom, OpenAI acknowledges the risk of users becoming emotionally reliant on personalized chatbots, raising new questions about responsible AI interaction.

Why It Matters: OpenAI is walking a fine line, attempting to satisfy user demands for fewer restrictions while navigating complex safety issues. This move signals a broader industry trend toward user customization and the growing challenge of managing human-AI relationships.

Amazon AI Book Club

Next in AI: Amazon's Kindle app now features "Ask this Book," an AI chatbot that answers your questions about the book you're reading, but it was launched without an opt-out for authors or publishers.

Decoded:

The feature acts as an in-book chatbot, providing spoiler-free answers to questions about plot, characters, and themes directly within the reading experience.

Amazon confirmed that creators cannot opt out of the feature, raising immediate concerns among authors and publishers about copyright and derivative works.

This rollout follows other recent issues with Amazon's AI, including the company pausing its Prime Video AI recaps after a recap for the show Fallout was found to be filled with mistakes.

Why It Matters: Amazon is pushing AI-driven convenience directly to consumers, even when it creates friction with content creators. This move highlights the growing conflict over how companies use copyrighted material to power their AI features.

The AI Buildout Hits a Snag

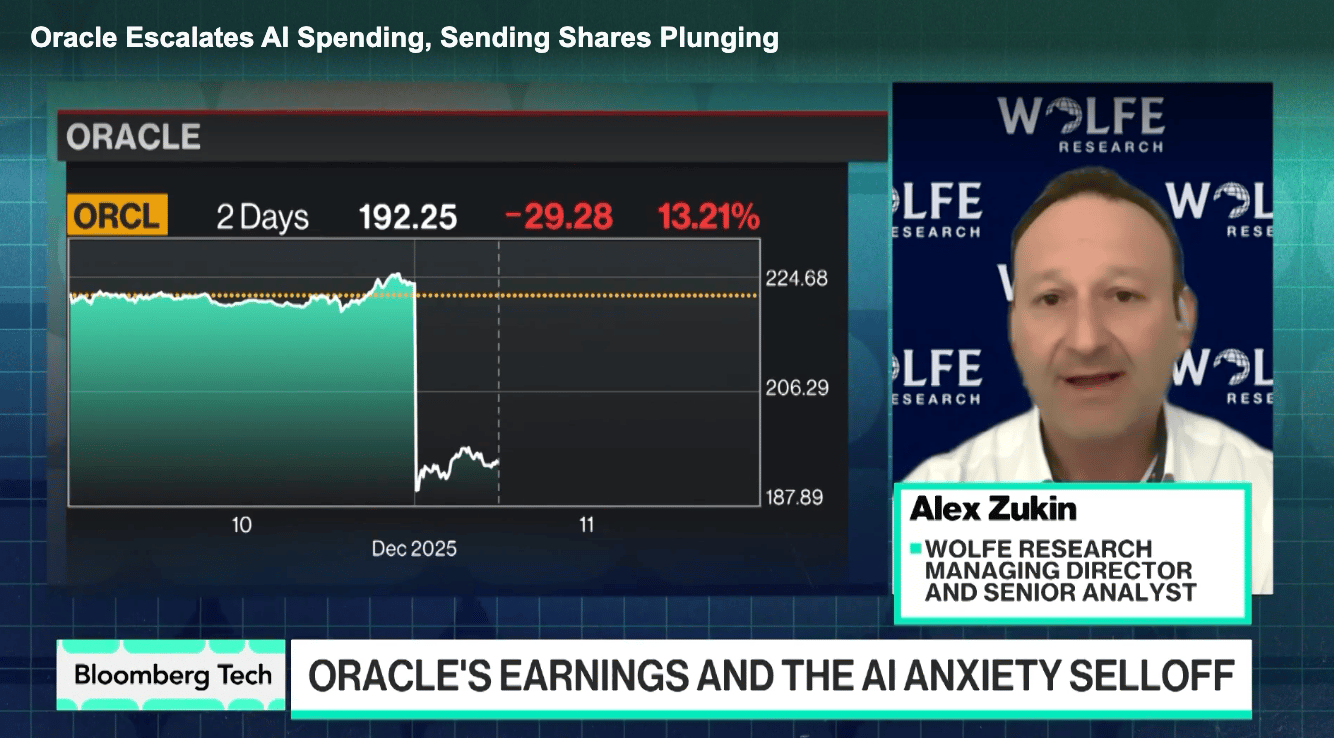

Next in AI: A report alleging that Oracle had delayed data centers being built for OpenAI sent a chill through AI stocks, even as Oracle publicly denied the claim. The reaction highlights the market's growing sensitivity to the real-world costs of scaling AI.

Decoded:

The news triggered a notable sell-off in AI-related stocks, with Nvidia and Broadcom tumbling alongside Oracle as investors grew nervous about infrastructure bottlenecks.

Oracle quickly issued a denial, stating there have been “no delays” to any sites required to meet its contractual commitments with OpenAI and that all milestones remain on track.

The incident shows that investor concerns are expanding beyond just chips to include physical infrastructure challenges like labor shortages, power availability, and construction logistics.

Why It Matters: The market's sharp reaction signals a shift from pure hype to a more sober assessment of AI's massive infrastructure demands. Physical world constraints are becoming just as important as computing power in the race to scale artificial intelligence.

The Chip War's New Chapter

Next in AI: Just days after the Trump administration approved sales of Nvidia’s H200 AI chips to China, Beijing appears to be slamming the door shut. White House AI czar David Sacks says China may now be actively rejecting the chips, choosing self-reliance over access to U.S. hardware.

Decoded:

China is reportedly discouraging domestic firms from buying the H200, requiring justification for why local alternatives cannot be used, in order to protect Huawei and accelerate semiconductor independence.

Although newly approved for sale, the H200 is already considered lagging technology. It sits roughly 18 months behind Nvidia’s Blackwell and Rubin chips, which remain banned from export, and that gap limits its strategic value.

Sacks warns the approach may be backfiring: after years of U.S. restrictions that constrained Chinese AI development, Beijing now appears willing to forgo Nvidia’s chips entirely to avoid long-term dependence on U.S. technology.

Why It Matters: This marks a shift from U.S.-driven restrictions to mutual decoupling. If China refuses even approved American chips, the AI arms race accelerates toward two separate compute ecosystems, potentially locking in a permanent split between U.S. and Chinese AI stacks and reshaping the balance of power in global AI.

AI Pulse

Palantir sued the CEO of rival firm Percepta, alleging that the company, founded by ex-Palantir employees, engaged in a widespread effort to poach staff and built a "copycat" business.

Researchers found that top LLMs consistently fail to distinguish between objective facts and subjective beliefs, often refusing to acknowledge a user's stated false belief and attempting to correct it instead.

Trump signed an executive order blocking states from enforcing their own AI regulations, aiming to create a single national framework to prevent a patchwork of rules from slowing innovation.

The city of Chandler, Arizona, rejected a proposed AI data center after significant community opposition, highlighting the growing local resistance to the massive power and resource demands of the AI infrastructure buildout.