PLUS: Why more AI agents can fail, a critical OpenClaw vulnerability, and the 'right to compute' movement

Good morning

SpaceX wants to meet AI's growing energy needs by putting data centers directly into orbit, proposing a network of up to one million solar-powered satellites. The move comes as merger talks with Elon Musk's xAI appear to be gaining traction.

This plan signals a future where computation is no longer bound to Earth's energy grid, but what are the implications of creating such a powerful, vertically integrated AI-to-space-hardware company?

In today’s Next in AI:

SpaceX's orbiting million-satellite AI brain

Google’s rules for scaling AI agent teams

A critical vulnerability in the OpenClaw AI agent

The ‘right to compute’ movement spreading across the US

SpaceX's Orbiting AI Brain

Next in AI: SpaceX is seeking FCC approval to launch up to 1 million solar-powered satellites as orbiting data centers, a move that coincides with rumored merger talks with Elon Musk’s xAI.

Decoded:

The scale is enormous: up to 1 million new satellites would join the roughly 15,000 already orbiting Earth, a population that is already raising concerns about space debris.

The move aligns with a potential merger between SpaceX and xAI, which Elon Musk appeared to confirm by replying "Yeah" to a post about the deal on X.

The filing frames the project as the most efficient way to meet AI's growing energy needs by leveraging continuous solar power in space.

Why It Matters: This plan signals a future where AI's computational and energy demands could be met by space-based infrastructure. The potential merger also points toward a powerful, vertically integrated AI-to-space-hardware company under Musk's control.

Google's Agent Scaling Rules

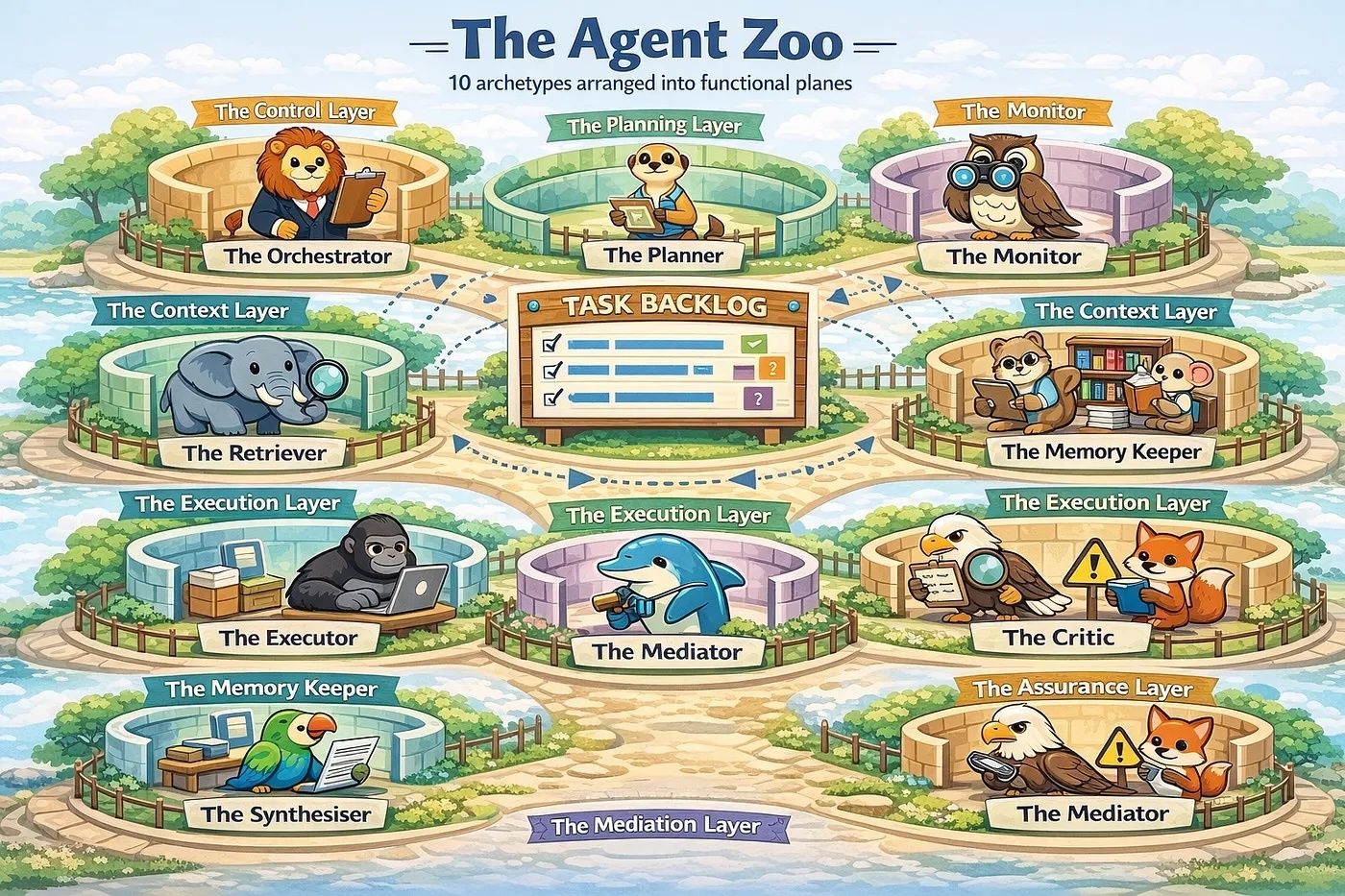

Next in AI: Google's new research offers what it calls the first quantitative principles for scaling AI agent systems. It challenges the "more is better" assumption by showing that multi-agent teams can actually hurt performance.

Decoded:

On parallelizable tasks like financial reasoning, a centralized multi-agent system boosted performance by 80.9% over a single agent by effectively dividing the work.

Conversely, on tasks requiring strict sequential steps, every multi-agent system tested degraded performance by 39-70% due to communication overhead.

Architecture doubles as a safety feature: in centralized systems, the orchestrator acts as a validation check, reducing error amplification nearly 4x compared with independent agents.
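To see why an orchestrator that checks sub-results can curb error amplification, here is a toy probability model. It is not Google's methodology or its error-amplification metric. It simply assumes that each of `n` agents errs independently with probability `p`, and that a validating orchestrator catches a fixed fraction `catch_rate` of those errors. All three parameters are illustrative assumptions.

```python
def residual_error_rate(p: float, n: int, catch_rate: float = 0.0) -> float:
    """Toy model: probability that at least one wrong sub-result reaches the final answer.

    p          -- assumed per-agent error probability, independent across agents
    n          -- number of agents whose outputs are combined
    catch_rate -- assumed fraction of errors a validating orchestrator detects and fixes
                  (0.0 models independent agents with no validation step)
    """
    if not (0.0 <= p <= 1.0 and 0.0 <= catch_rate <= 1.0) or n < 0:
        raise ValueError("p and catch_rate must be in [0, 1], n must be >= 0")
    # An error only propagates if the agent makes it AND the orchestrator misses it.
    escaped = p * (1.0 - catch_rate)
    # The final answer is clean only if every one of the n sub-results is clean.
    return 1.0 - (1.0 - escaped) ** n


independent = residual_error_rate(p=0.05, n=4)                    # no validation
centralized = residual_error_rate(p=0.05, n=4, catch_rate=0.75)   # hypothetical validator
print(f"independent: {independent:.4f}, centralized: {centralized:.4f}")
```

With `p = 0.05` and four agents, the unchecked pipeline has about an 18.5% chance of passing a bad sub-result into the final answer. If the orchestrator is assumed to catch 75% of errors, that falls to roughly 4.9%. The real-world gain depends entirely on how good the validation step actually is.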

Why It Matters: This research shifts agent design from a heuristic-based art toward a data-driven science. Developers can now make principled decisions, matching the architecture to the task at hand to build more efficient and reliable AI systems.

Your AI Agent's Backdoor

Next in AI: Security researchers have disclosed a critical vulnerability in OpenClaw, the popular open-source AI agent. The "1-Click RCE" (remote code execution) flaw could let an attacker steal sensitive data and run code on a user's machine after a single click on a malicious link.

Decoded:

The exploit works by tricking a user's OpenClaw instance into connecting to an attacker's server, which leaks the authentication token needed to control the agent.

With the stolen token, an attacker can programmatically disable all safety features, including user confirmation prompts and containerized sandboxes, to gain full control.

All OpenClaw versions up to v2026.1.24-1 are vulnerable; users are strongly urged to upgrade and rotate any potentially exposed tokens and keys immediately.
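The core of the exploit is a client that hands its authentication token to whatever server it is pointed at. A common defensive pattern is to check the destination against an allowlist before attaching any credentials. The sketch below illustrates that general pattern only. It is not OpenClaw's code or its actual fix, and the function names, the `ws`/`wss` scheme rule, and the `localhost:8080` allowlist entries are all hypothetical.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist of (hostname, port) pairs the client may send its token to.
TRUSTED_GATEWAYS = {("127.0.0.1", 8080), ("localhost", 8080)}


def is_trusted_gateway(url: str, trusted=TRUSTED_GATEWAYS) -> bool:
    """Return True only if url is a ws:// or wss:// URL whose parsed host:port is allowlisted."""
    parts = urlsplit(url)
    if parts.scheme not in ("ws", "wss"):
        return False
    try:
        port = parts.port  # raises ValueError for a malformed or out-of-range port
    except ValueError:
        return False
    if port is None:
        # Fall back to the default port for the scheme.
        port = 443 if parts.scheme == "wss" else 80
    # Compare the *parsed* hostname, never a raw substring of the URL.
    return (parts.hostname, port) in trusted


def connect_with_token(url: str, token: str) -> str:
    """Hypothetical connect step: refuse to attach the token to an untrusted destination."""
    if not is_trusted_gateway(url):
        # Do not echo the token in the error message.
        raise PermissionError(f"refusing to send auth token to untrusted gateway: {url}")
    # A real client would open the WebSocket here and authenticate with `token`.
    return f"would connect to {url}"
```

A URL such as `ws://127.0.0.1:8080@attacker.example/` looks local, but `urlsplit` treats `127.0.0.1:8080` as user-info and reports the host as `attacker.example`. That is why the check compares the parsed host and port rather than matching strings.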

Why It Matters: As AI agents gain more access to our digital lives, their security can become the weakest link. This incident is a powerful reminder that the convenience of automation must be balanced with rigorous security practices.

The Right to AI

Next in AI: A new "right-to-compute" legal movement is spreading across the U.S., aiming to shield AI from regulation by treating computational systems like free speech. Montana passed the first-of-its-kind law in 2025, setting a precedent for other states to follow.

Decoded:

The movement grew directly out of cryptocurrency advocacy, evolving from earlier "right to mine" legislation designed to protect Bitcoin mining operations.

These laws create a statutory right that triggers heightened judicial scrutiny, forcing courts to review restrictions on AI much as they review limits on free speech or property rights.

Critics warn the intentionally broad definition of "compute" could lead to regulatory paralysis, making it possible to legally challenge future rules on AI safety, audits, and ethical oversight.

Why It Matters: This movement reframes AI development from a technological capability into a fundamental right. It presents a significant challenge for policymakers attempting to establish guardrails for AI ethics and safety at the state level.

AI Pulse

Hollywood explores the dangers of AI-driven justice in the new sci-fi thriller "Mercy," which depicts a court system where an AI serves as judge, jury, and executioner.

Microsoft is reportedly using Anthropic's Claude Code for its own teams while selling its less-capable Copilot to enterprises, highlighting a growing productivity gap between AI power users and those stuck with locked-down corporate tools.

A Terminal-Bench comparison pits minimal, custom-built coding agents against mainstream tools like Codex and Cursor and argues that feature bloat in popular harnesses is hurting developer workflows.